As I’ve been posting some photos of decapped ICs lately, I thought I’d share the process I use personally for those who might want to give it a go 😉

The usual method for removing the epoxy package from the silicon is to use hot, concentrated Nitric Acid. Besides the obvious risks of having hot acids around, the decomposition products of the acid, namely NO₂ (Nitrogen Dioxide) & NO (Nitric Oxide), are toxic and corrosive. So until I can get the required fume hood together to make sure I’m not going to corrode the place away, I’ll leave this process to proper labs ;).

The method I use is heat-based, using a Propane torch to destroy the epoxy package without damaging the Silicon die too much.

I start off, obviously, with a desoldered IC; the one above is an old audio DSP from TI. I usually desolder en masse for this with a heat gun, stripping the entire board in one go.

Next is to apply the torch to the IC. A bit of practice is required here to get the heat level & time exactly right; overheating will cause the die to oxidize & blacken, or residual epoxy to stick to the surface.

I usually apply the torch until the package just about stops emitting its own yellow flames, meaning the epoxy is almost completely burned away. I also keep the torch flame away from the centre of the IC, where the die is located.

Breathing the fumes from this process isn’t recommended. Besides the obvious soot, the burning plastic will no doubt be emitting many compounds that aren’t brilliant for human health!

Once the IC is roasted to taste, it’s quenched in cold water for a few seconds. Sometimes this causes such a high thermal shock that the leadframe cracks off the epoxy around the die perfectly.

Now that the epoxy has been destroyed, it breaks apart easily, and is picked away until I uncover the die itself. (It’s the silver bit in the middle of the left half). The heat from the torch usually destroys the Silver epoxy holding the die to the leadframe, so the die can be removed easily from the remaining package.

BGA packages are usually the easiest to decap. Flip-chip packages are a total pain, due to the solder balls being on the front side of the die. I haven’t managed to get a good result here yet, so I’ll probably need to chemically remove the first layer of the die to get at the interesting bits 😉

Once the die has been rinsed in clean water & inspected, it’s mounted on a glass microscope slide with a small spot of Cyanoacrylate glue to make handling easier.

Some dies require some cleaning after decapping. For this I use 99% Isopropanol & 99% Acetone on the end of a cotton bud. Any residual epoxy flakes or oxide stuck to the die can be relatively easily removed with a fingernail; it turns out fingernails are hard enough to remove the contamination, but not hard enough to damage the die features.

Once cleaning is complete, the slide is marked with the die identification, and the photographing can begin.

Microscope Mods

I had bought a cheap eBay USB microscope to get started, as I can’t currently afford a proper metallurgical microscope, but I found the resolution of 640×480 very poor. Some modification was required!

I’ve removed the original sensor board from the back of the optics assembly & attached a Raspberry Pi camera board. The ring that held the original sensor board has been cut down to a minimum, as the Pi camera PCB is slightly too big to fit inside.

The stock ring of LEDs is run directly from the 3.3 V power rail on the camera, through a 4.7 Ω resistor, for ~80 mA. I also added a 1000 µF capacitor across the 3.3 V supply to compensate a bit for the long cable: when a frame is captured, the power draw of the camera increases & causes a bit of voltage drop.
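As a quick sanity check on those LED figures, here is a minimal sketch of the arithmetic. It assumes the whole LED ring can be treated as a single load in series with the resistor, which is a simplification on my part; the function names are just for illustration. With the numbers above, the resistor drops roughly 0.38 V, which implies about 2.92 V across the LEDs.

```python
# Figures quoted in the text: 3.3 V rail, 4.7 ohm resistor, roughly 80 mA.
SUPPLY_V = 3.3
RESISTOR_OHMS = 4.7
LED_CURRENT_A = 0.080


def resistor_drop(current_a, resistance_ohms):
    """Voltage across the series resistor, from Ohm's law (V = I * R)."""
    return current_a * resistance_ohms


def implied_led_voltage(supply_v, current_a, resistance_ohms):
    """Voltage left over for the LED ring once the resistor drop is removed.

    Assumption: the ring is treated as one lumped load in series with the resistor.
    """
    return supply_v - resistor_drop(current_a, resistance_ohms)


def resistor_power(current_a, resistance_ohms):
    """Power dissipated in the resistor (P = I^2 * R), in watts."""
    return current_a ** 2 * resistance_ohms


if __name__ == "__main__":
    drop = resistor_drop(LED_CURRENT_A, RESISTOR_OHMS)
    led_v = implied_led_voltage(SUPPLY_V, LED_CURRENT_A, RESISTOR_OHMS)
    power_mw = resistor_power(LED_CURRENT_A, RESISTOR_OHMS) * 1000
    print(f"Resistor drop: {drop:.3f} V")
    print(f"Implied LED voltage: {led_v:.3f} V")
    print(f"Resistor dissipation: {power_mw:.1f} mW")
```

The dissipation works out to a few tens of milliwatts, so a small resistor is fine here.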

The stock lens was removed from the Pi camera module by careful use of a razor blade – being too rough here *WILL* damage the sensor die or the gold bond wires, which are very close to the edge of the lens housing, so be gentle!

The existing mount for the microscope is pretty poor, so I’ve used a couple of surplus ceramic ring magnets as a better base. This also gives me the option of raising or lowering the base by adding or removing magnets.

To get more length between the Pi & the camera, I bought a 1-metre cable extension kit from Pi-Cables on eBay. Cables this long *definitely* require shielding in my space, which is a pretty aggressive RF environment, otherwise interference appears on the display. Not surprising considering the high data rates the cable carries.

The FFC interface is hot-glued to the back of the microscope mount for stability. For handheld use, the FFC is pretty flexible & doesn’t apply any force to the scope.

Die Photography

Since I modified the scope with a Raspberry Pi camera module, everything is done through the Pi itself, and the raspistill command.

The command I’m currently using to capture the images is:

raspistill -ex auto -awb auto -mm matrix -br 62 -q 100 -vf -hf -f -t 0 -k -v -o CHIPNAME_%03d.jpg

This command waits between each frame for the ENTER key to be pressed, allowing me to position the scope between shots. Pi control & file transfer is done via SSH, while I use the 7″ touch LCD as a viewfinder.
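To save retyping the chip name every time, the same command could be wrapped in a small script. This is only a sketch: the flags are copied unchanged from the command above, while the `build_capture_command` and `capture` helpers are names I've made up for illustration.

```python
import shlex
import subprocess


def build_capture_command(chip_name):
    """Return the raspistill argument list for one die, using the flags from the text."""
    return [
        "raspistill",
        "-ex", "auto",        # exposure mode
        "-awb", "auto",       # white balance mode
        "-mm", "matrix",      # metering mode
        "-br", "62",          # brightness
        "-q", "100",          # JPEG quality
        "-vf", "-hf",         # vertical & horizontal flip
        "-f",                 # fullscreen preview, used as the viewfinder
        "-t", "0",            # no timeout, keep running
        "-k",                 # wait for ENTER between frames
        "-v",                 # verbose output
        "-o", f"{chip_name}_%03d.jpg",  # numbered output files
    ]


def capture(chip_name):
    """Run the capture session on the Pi; needs raspistill installed."""
    subprocess.run(build_capture_command(chip_name), check=True)


if __name__ == "__main__":
    # Just print the command so it can be checked before running it for real.
    print(shlex.join(build_capture_command("CHIPNAME")))
```

Calling `capture("CHIPNAME")` on the Pi should then behave like the command above, with the frames numbered per chip.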

The direct overhead illumination provided by the stock ring of LEDs isn’t ideal for some die shots, so I’m planning on fitting some off-centre LEDs to improve the resulting images.

Image Processing

Obviously I can’t get an ultra-high resolution image with a single shot, due to the focal length, so I have to take many shots (30-180 per die), and stitch them together into a single image.
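For a rough idea of how many shots a die will need, the grid can be estimated from the die size, the area covered by one frame, and the overlap between neighbouring frames. This is a sketch under stated assumptions: the die and field-of-view dimensions in the example are hypothetical values, not measurements from my setup, and the `frames_needed` helper is just an illustrative name.

```python
import math


def frames_needed(die_w, die_h, fov_w, fov_h, overlap):
    """Estimate (columns, rows, total) frames needed to cover a die.

    die_w, die_h: die size; fov_w, fov_h: area covered by one frame (same units).
    overlap: fraction of each frame shared with its neighbour, e.g. 0.5 for 50%.
    """
    if not 0 <= overlap < 1:
        raise ValueError("overlap must be in the range [0, 1)")

    def count(die, fov):
        step = fov * (1 - overlap)       # new area gained per extra frame
        remaining = max(0.0, die - fov)  # what the first frame doesn't cover
        # Small epsilon guards against floating-point noise before rounding up.
        return 1 + math.ceil(remaining / step - 1e-9)

    cols = count(die_w, fov_w)
    rows = count(die_h, fov_h)
    return cols, rows, cols * rows


if __name__ == "__main__":
    # Hypothetical example: 4.0 x 3.0 mm die, 1.0 x 0.75 mm per frame, 50% overlap.
    cols, rows, total = frames_needed(4.0, 3.0, 1.0, 0.75, 0.5)
    print(f"{cols} columns x {rows} rows = {total} frames")
```

Raising the overlap shrinks the step between frames, so the frame count climbs quickly.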

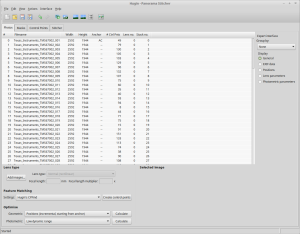

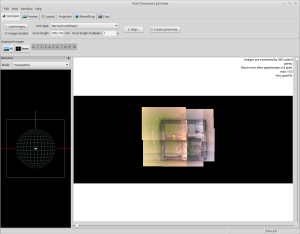

For this I use Hugin, an open-source panorama photo stitching package.

Here’s Hugin with the photos loaded in from the Raspberry Pi. To start with, I use Hugin’s built-in CPFind to process the images for control points. The trick with getting good control points is making sure the images have a high level of overlap, between 50% and 80%. This way the software doesn’t get confused & stick the images together incorrectly.

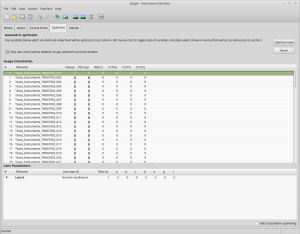

After the control points are generated, which for a large number of high resolution images can take some time, I run the optimiser with only Yaw & Pitch selected for all images.

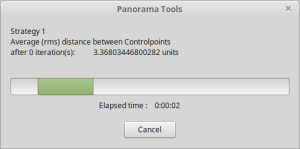

If all goes well, the resulting optimisation will get the distance between control points to less than 0.3 pixels.
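I do all of this in the Hugin GUI, but the same steps could probably be scripted with Hugin's command-line tools. The sketch below only builds the command lists, and only runs them if asked to. The tool names and options (`pto_gen`, `cpfind`, `pto_var --opt`, `autooptimiser -n`, `pano_modify`, `hugin_executor`) are from my understanding of the Hugin tools, so treat them as assumptions and check them against your installed version. The file names and the `build_stitch_commands` helper are hypothetical.

```python
import subprocess


def build_stitch_commands(images, project="die.pto", prefix="die"):
    """Return Hugin CLI commands mirroring the GUI steps described in the text.

    Assumption: the Hugin command-line tools are installed and accept these options.
    """
    return [
        # Create a project file from the captured frames.
        ["pto_gen", "-o", project, *images],
        # Find control points between overlapping frames.
        ["cpfind", "-o", project, project],
        # Mark only yaw & pitch for optimisation, as in the GUI step.
        ["pto_var", "--opt", "y,p", "-o", project, project],
        # Optimise just the variables marked in the project file.
        ["autooptimiser", "-n", "-o", project, project],
        # Fit the output canvas & crop to the stitched area.
        ["pano_modify", "--canvas=AUTO", "--crop=AUTO", "-o", project, project],
        # Remap & blend the frames into the final image.
        ["hugin_executor", "--stitching", f"--prefix={prefix}", project],
    ]


def run_stitch(images, project="die.pto", prefix="die"):
    """Run each step in order, stopping at the first failure."""
    for command in build_stitch_commands(images, project, prefix):
        subprocess.run(command, check=True)


if __name__ == "__main__":
    # Hypothetical file names, printed for inspection rather than executed.
    for command in build_stitch_commands(["CHIPNAME_000.jpg", "CHIPNAME_001.jpg"]):
        print(" ".join(command))
```

The GUI is still handy for checking the control point distances and previewing the result before committing to a long stitch.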

After the control points & optimisation are done, the resulting image can be previewed before generation.

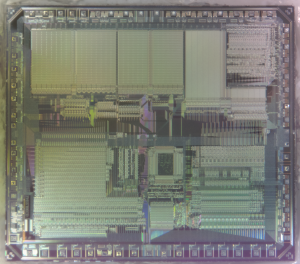

After all the image processing, the resulting die image should look something like the above, with no noticeable gaps.